By Eleanor March, Senior Policy Analyst

You are likely facing this question in a familiar setting. A briefing pack lands on your desk ahead of a ministerial meeting. One country presents progress on labour markets, another on climate delivery, a third on health preparedness. Each delegation says its numbers support urgency, restraint, or exception. The figures look authoritative. The harder question is whether they come from a dataset that deserves your confidence.

That is what makes “what is a dataset” a policy question, not only a technical one. In government and multilateral work, a dataset is not just a spreadsheet someone emailed to your office. It is a structured collection of observations and variables, supported by definitions and metadata, that allows officials to compare like with like, trace provenance, and judge whether the evidence is fit for a public decision.

For ministers, the distinction matters. A weak dataset can distort negotiations, hide delivery failures, and create legal or reputational exposure. A strong one can align ministries, improve comparability across borders, and make international commitments easier to monitor. In other words, datasets are both strategic assets and potential liabilities.

Table of Contents

- Why Sound Data Is the Bedrock of Global Policy
- Deconstructing a Dataset: The Core Components
- A Typology of Datasets for Global Analysis
- From Collection to Credibility: Data Standards and Quality
- Using Datasets: Concrete Examples from Global Summits
- The Legal and Ethical Dimensions of Policy Data
- Conclusion: How to Integrate Data Into Your Policy Cycle

Why Sound Data Is the Bedrock of Global Policy

At a G20 summit, a minister may enter the room with a credible domestic brief and still leave with a weaker negotiating position if the underlying dataset cannot withstand comparison with those used by peers. In high-level diplomacy, the contest is rarely about whether evidence matters. It is about whether the evidence is defined consistently, updated reliably, and documented well enough to support collective action.

Policy decisions depend on structured evidence

Global policy runs on comparison. Finance ministers compare inflation and debt indicators before coordinating macroeconomic responses. Health authorities compare surveillance data before agreeing cross-border measures. Climate negotiators compare emissions baselines, sector classifications, and reporting years before judging whether commitments are credible or merely presented in compatible language.

A dataset gives that comparison a disciplined form. It turns dispersed observations into a structure that officials can test, aggregate, and challenge. Without that structure, governments are left with briefing notes, speeches, and dashboards that may be persuasive in domestic politics but weak in international scrutiny.

This is why datasets should be understood as strategic assets.

They shape resource allocation, define baselines for reform, and influence whether a country is seen as transparent and credible. The same dataset can also become a liability if its categories are poorly defined, its update cycle is unclear, or its provenance cannot be explained under pressure. For ministers, that is not a technical inconvenience. It is a governance risk.

Practical rule: Evidence becomes usable for policy only when officials can compare it, interpret it, and defend it.

Downloadable is not the same as trustworthy

Public access to a file does not settle the harder question of whether it is fit for decision-making. The Office for National Statistics emphasises that data quality, linkage, documentation, and metadata determine whether datasets can be interpreted consistently and compared across systems, as set out in the ONS guidance on making data sources trustworthy and comparable.

That distinction matters most in cross-border settings. A dataset may look polished and still contain mismatched definitions between ministries, gaps in collection methods across regions, or category changes that make year-on-year trends unreliable. Those weaknesses distort domestic targeting. In G7 and G20 processes, they also reduce a government's ability to defend its numbers, align with common frameworks, or challenge weak claims from others.

Trustworthy datasets therefore serve two functions at once. They support better policy choices at home, and they make international cooperation more credible. A government that can explain where its data came from, how each field was defined, when it was last updated, and what its limits are enters negotiations with a stronger hand.

For that reason, datasets deserve the same scrutiny as fiscal assumptions or legal advice. Before officials rely on them, they should be able to answer four questions clearly: who produced this dataset, what exactly does each variable measure, how current is it, and is it genuinely comparable with the data used by partners?

Deconstructing a Dataset: The Core Components

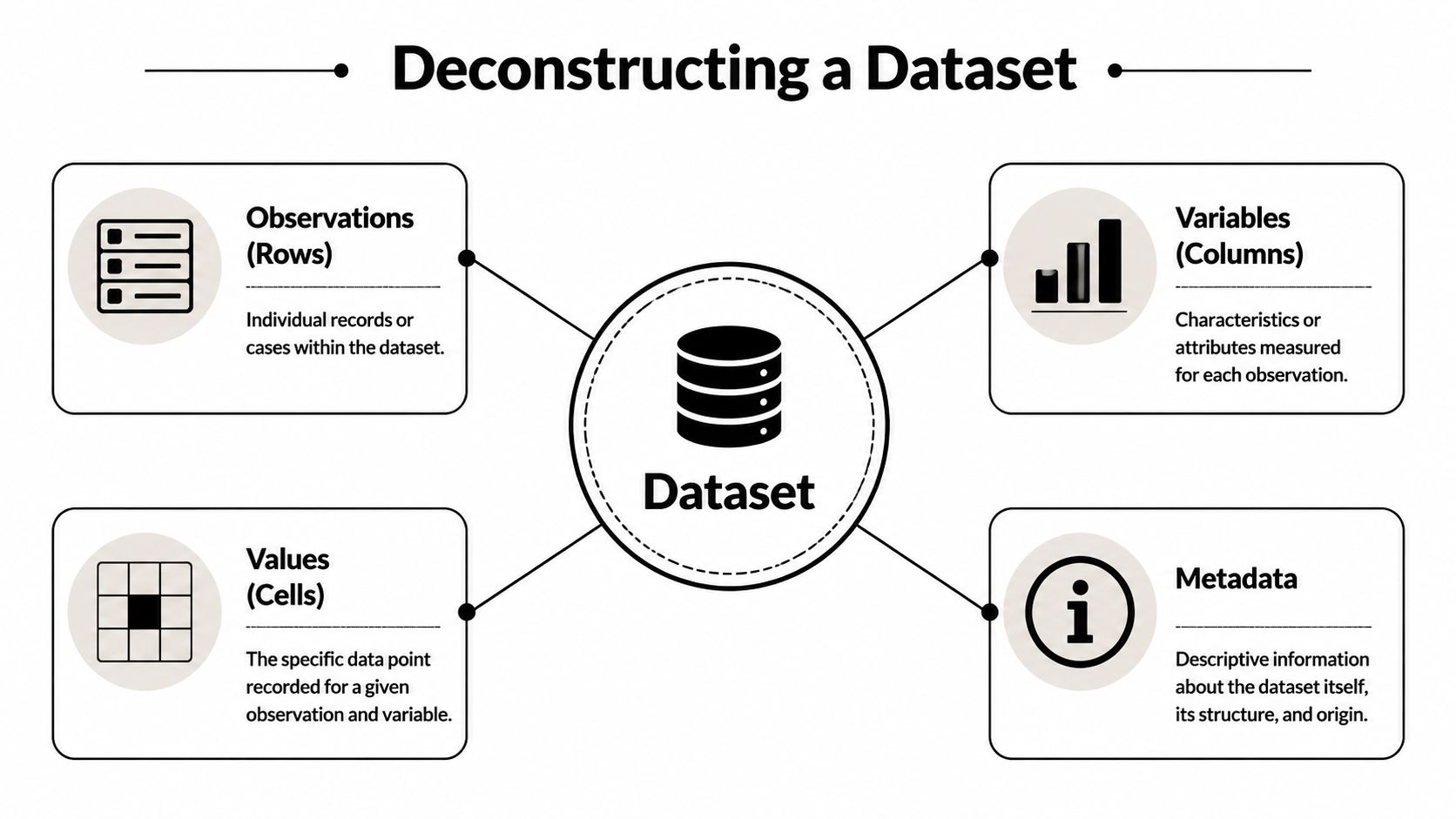

In a G20 negotiation, a minister may be handed a spreadsheet that appears straightforward and politically useful. The real test is whether the underlying structure supports comparison, scrutiny, and defence under questioning. A dataset consists of four components: observations, variables, values, and metadata.

The four components that determine whether a dataset is usable

Consider a dataset on countries' energy transition commitments.

- Observation is one record. Here, one country entry is a single observation.

- Variable is one attribute recorded across observations, such as target year, policy category, financing mechanism, or reporting ministry.

- Value is the content in a specific cell. It is the recorded entry for one variable within one observation.

- Metadata explains the dataset itself. It records who compiled it, when it was updated, how fields were defined, what methods were used, and which limitations apply.

This structure gives officials a practical way to test whether a dataset can support policy. If observations are inconsistent, the unit of analysis may shift without warning. If variables are poorly defined, countries can appear comparable when they are not. If values are missing or coded unevenly, rankings and trend lines become unstable. If metadata is weak, none of the other components can be interpreted with confidence.
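The four components can be made concrete with a small sketch. It assumes a hypothetical energy transition dataset; the `Dataset` class, the country labels, the field names, and every value are illustrative inventions, not real reporting data or an established schema.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    """A toy dataset: variables are column names, observations are rows."""
    variables: list
    observations: list
    metadata: dict = field(default_factory=dict)

    def value(self, row, variable):
        """Return the value recorded for one variable in one observation."""
        return self.observations[row][variable]


# Hypothetical entries for illustration only.
commitments = Dataset(
    variables=["country", "target_year", "policy_category"],
    observations=[
        {"country": "Country A", "target_year": 2040,
         "policy_category": "coal phase-out"},
        {"country": "Country B", "target_year": 2050,
         "policy_category": "renewables target"},
    ],
    metadata={
        "compiled_by": "Hypothetical secretariat",
        "last_updated": "2024-01-15",
        "definitions": {
            "target_year": "Year by which the commitment is to be met",
        },
    },
)

# One observation per country, one value per variable within it.
print(len(commitments.observations))
print(commitments.value(0, "target_year"))
print(commitments.metadata["definitions"]["target_year"])
```

Keeping the metadata inside the same object as the values is a design choice in this sketch: it makes it harder to pass the numbers around without the definitions that give them meaning.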

The point is not technical neatness. It is decision quality.

Metadata determines whether evidence can survive political and diplomatic scrutiny

Senior officials often focus first on the values because those are what appear in charts, league tables, and briefing notes. The more consequential questions usually sit in the metadata. If a dataset records that a country has met a target, the relevant follow-up is clear: who defined compliance, against which baseline, using what reporting rules, and over what period?

Those questions matter because datasets do more than store facts. They encode institutional choices. A variable labelled "clean energy investment" may include public subsidies in one jurisdiction and exclude them in another. A field marked "implementation status" may reflect self-reporting in one case and third-party verification in another. Two cells can look equivalent while resting on different administrative practices, legal definitions, and incentives.

For ministers, that distinction has direct consequences. It affects whether a domestic programme is judged fairly, whether an international commitment can be defended, and whether a partner's claim should be accepted without challenge.

| Component | What it tells you | Why it matters in government |
|---|---|---|
| Observation | What unit is being described | Prevents mixing countries, regions, firms, or households |
| Variable | What attribute is measured | Reduces false comparison and category error |
| Value | The recorded entry | Supports analysis, modelling, and reporting |
| Metadata | Definitions, provenance, timing, method, format | Protects interpretation, accountability, and cross-country comparability |

A dataset without metadata shifts the burden of interpretation from the producer to the user. In government, that creates avoidable policy and reputational risk.

That is why policy teams should request the data dictionary as routinely as the file itself. If officials cannot state what each field measures, how it was produced, and where comparability breaks down, they are not yet ready to use the dataset in a cabinet submission, a summit briefing, or a public claim.
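One way a policy team might operationalise that request is to check that every field in a file has an entry in its data dictionary. The sketch below is a minimal illustration; the function name, column names, and definitions are hypothetical.

```python
def undocumented_fields(columns, data_dictionary):
    """Return the columns that have no non-empty definition."""
    return [c for c in columns if not data_dictionary.get(c, "").strip()]


# Hypothetical columns from a briefing file.
columns = ["country", "target_year", "implementation_status"]

# Hypothetical data dictionary; "implementation_status" is left
# undefined on purpose to show what the check reports.
data_dictionary = {
    "country": "Reporting state",
    "target_year": "Year by which the commitment is to be met",
}

missing = undocumented_fields(columns, data_dictionary)
print(missing)  # ['implementation_status']
```

A non-empty result does not mean the data is wrong. It means the team cannot yet state what those fields measure.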

A Typology of Datasets for Global Analysis

A minister preparing for a G20 meeting may ask a straightforward question: is the country meeting its commitments, and how does its performance compare with peers? The answer depends less on whether data exists than on what kind of dataset is being used. A trend series can show direction. It cannot show who is excluded. An administrative register can reveal programme reach. It may fail to capture informal activity or public sentiment. In international settings, choosing the wrong dataset type can distort negotiations, misstate progress, and weaken credibility.

Dataset type determines the claims officials can defend.

For global analysis, the practical distinction is not technical complexity. It is fitness for purpose. Some datasets are designed to track change over time. Others describe populations at a single point, record the behaviour of institutions, capture self-reported experience, or attach events to location. Each carries a different mix of strengths, blind spots, and comparability risks. That matters in G7 and G20 processes, where governments often treat headline indicators as interchangeable even when they were built for different decisions.

Common Dataset Types in Policymaking

| Dataset Type | Key Characteristic | G20/Global Health Policy Example |
|---|---|---|
| Time-series | Repeated observations at consistent intervals | Tracking implementation of economic or climate commitments over time |
| Administrative | Built from operational records | Monitoring public health service use across jurisdictions |
| Survey | Collected from sampled respondents | Assessing public trust or labour market conditions |
| Geospatial | Linked to location | Mapping service gaps, exposure or regional disparities |
| Text or multimedia | Uses documents, images or audio | Reviewing policy sentiment or communication patterns |
| Topic-specific repository | Organised around a defined policy issue | Following renewable energy, trade or health financing commitments |

Time-series datasets are most useful when the policy question concerns sequence, persistence, or turning points. They support monitoring against targets and can clarify whether a reform coincided with measurable change. Their weakness is equally important. A stable national average can conceal widening regional gaps, and a rising indicator can reflect a change in reporting practice rather than a change in underlying conditions.
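A short worked example shows how a stable average can hide divergence. The figures are invented for illustration, and the sketch assumes an unweighted average across three equally sized hypothetical regions.

```python
from statistics import mean

# Hypothetical indicator values by region (for example, a service
# coverage percentage) in two reporting years.
regions_by_year = {
    2020: {"north": 50, "south": 50, "east": 50},
    2023: {"north": 65, "south": 35, "east": 50},
}

summary = {}
for year, values in regions_by_year.items():
    scores = list(values.values())
    summary[year] = {
        "national_average": mean(scores),       # unweighted, by assumption
        "regional_gap": max(scores) - min(scores),
    }

for year, stats in summary.items():
    print(year, stats["national_average"], stats["regional_gap"])
```

In this invented case the national average is 50 in both years, while the gap between the best and worst region grows from 0 to 30 points. A real analysis would normally weight regions by population.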

Administrative datasets serve a different purpose. They are generated through the normal operation of the state, in tax systems, schools, hospitals, border agencies, and social protection programmes. For ministers, their value lies in scale and operational relevance. They often provide the closest view of how policy is functioning on the ground. Yet they also reflect institutional incentives and legal design. If eligibility rules differ across countries, or if reporting is weak in lower-capacity jurisdictions, cross-country comparisons can become misleading.

Survey datasets are often the only credible way to examine experience, perception, or conditions not visible in official records. They are particularly useful when governments need to understand trust in institutions, household welfare, or labour market insecurity beyond formal employment systems. Their limits are familiar but often underappreciated in diplomacy. Results depend on sampling, questionnaire wording, timing, and response behaviour. A survey can improve policy judgment, but it should not be treated as directly comparable to administrative counts without careful adjustment.

Geospatial datasets add a layer many central governments miss. They reveal where outcomes cluster, where services fail to reach, and where climate, health, or infrastructure risks overlap. In multinational initiatives, location-based analysis can expose why a national commitment is being met on paper but not in border regions, secondary cities, or fragile areas. For ministers managing territorial inequality, this type of dataset often changes the policy response from general funding increases to targeted intervention.

Text and multimedia datasets have become more relevant in summit preparation and strategic communications. They can be used to analyse speeches, regulatory documents, media narratives, and public messaging across countries. Their policy value lies in identifying framing, commitments, and divergence in official positions. Their risk is interpretive overreach. Classification methods, translation choices, and training data can shape conclusions in ways that are less visible than in a spreadsheet.

Topic-specific repositories are often the starting point for international cooperation because they assemble material from multiple producers around one issue area, such as emissions reporting, pandemic surveillance, or development finance. They save time and support coordination. They also concentrate upstream weaknesses. If source systems use inconsistent definitions or verification methods, the repository can spread comparability problems at scale rather than solve them.

The strategic lesson for government is straightforward. The right dataset is the one that matches the decision, the accountability requirement, and the level of international scrutiny. If the question is whether implementation is on schedule, start with time-series evidence. If the question is who is missing out, examine administrative and geospatial records. If the question is how policies are experienced by citizens or firms, survey evidence may carry more weight than internal reporting.

Capable ministries classify datasets before they compare them. That discipline improves analysis. It also protects negotiating positions, budget choices, and public trust.

From Collection to Credibility: Data Standards and Quality

At 2 a.m., before a leaders' communiqué is finalised, a minister may be asked a simple question with expensive consequences: can this number be defended in front of peers, markets, and the public? At that point, a dataset is no longer just a technical input. It is part of the state's credibility.

Quality is a governance issue

For government, data quality is inseparable from administrative discipline and institutional trust. Provenance matters. So do completeness, consistency, timeliness, metadata, and version control. A dataset can look orderly in a spreadsheet and still fail under scrutiny if officials cannot show who produced it, under which definition, and with what revision process.

International settings raise the bar further. G7 and G20 discussions depend on evidence that can survive comparison across jurisdictions with different legal systems, reporting capacities, and political incentives. Standards such as FAIR help because they convert broad quality ambitions into operational tests. Can the right officials find the dataset quickly? Can they access it under clear conditions? Can it work with other systems? Can another institution reuse it without guessing what each field means?

These are not filing problems. They affect whether a treasury can validate a health ministry estimate, whether a regulator can reproduce an emissions figure, and whether a partner country accepts a national submission as comparable to its own. The same logic underpins efforts to turn data into decisive action on disaster resilience for the G20, where delays or inconsistencies in underlying records can weaken early action.

A technically correct dataset can still be unfit for purpose

Fitness for purpose is the test that often gets missed. A dataset may be accurately assembled and still mislead decision-makers if it excludes the populations, territories, or time periods that matter most to the policy question.

The Health Equity Tracker methodology makes this point clearly. Small-area health and deprivation data can be affected by missingness, suppression, and uneven coverage, which limits confident comparison across places, as explained in the Health Equity Tracker discussion of dataset limitations.

Policy errors often begin with false confidence in the data.

If coverage is uneven, the problem is not only analytical. It becomes political, because underserved groups disappear from the evidence base that shapes allocation.

For ministers and senior officials, four questions usually separate a usable dataset from a risky one:

- Who collected it, and for what purpose? A compliance dataset may be poorly suited to evaluating welfare outcomes or distributional effects.

- What is missing? Blank fields, suppressed categories, informal settlements, fragile regions, and inconsistent territorial coverage can all distort allocation decisions.

- Is it current enough for the decision? A clean historical series may still be too slow for crisis management, sanctions monitoring, or outbreak response.

- Will another institution interpret it the same way? If definitions, units, or classifications differ, comparability breaks down during negotiation.

Strong policy teams treat these checks as routine challenge functions, not optional technical reviews. That discipline protects budgets, strengthens negotiating positions, and reduces the risk of defending figures that cannot withstand external verification.
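Two of those checks, what is missing and whether the data is current enough, lend themselves to simple automation. The sketch below is illustrative only: the function names, the `coverage_rate` field, the sample rows, and the 90-day threshold are assumptions, not an established standard.

```python
from datetime import date


def completeness(rows, variable):
    """Share of observations with a non-missing value for one variable."""
    if not rows:
        return 0.0
    present = sum(1 for r in rows if r.get(variable) is not None)
    return present / len(rows)


def is_current(last_updated, as_of, max_age_days):
    """True if the dataset was updated within the allowed number of days."""
    return (as_of - last_updated).days <= max_age_days


# Hypothetical regional records; two of four lack a coverage value.
rows = [
    {"region": "R1", "coverage_rate": 0.82},
    {"region": "R2", "coverage_rate": None},   # suppressed or blank
    {"region": "R3", "coverage_rate": 0.74},
    {"region": "R4"},                          # field absent entirely
]

share = completeness(rows, "coverage_rate")
fresh = is_current(date(2024, 1, 15), as_of=date(2024, 6, 1), max_age_days=90)

print(share)  # 0.5
print(fresh)  # False
```

The thresholds are a policy judgement, not a technical one. What counts as current enough for outbreak response will differ from what is acceptable for a long-run historical series.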


Using Datasets: Concrete Examples from Global Summits

At summit level, datasets matter because they narrow the space for argument. They do not remove politics, but they make positions more testable.

Climate negotiations and implementation tracking

When leaders discuss emissions pathways or energy transition commitments, they rarely rely on one table alone. They work across time-series records, topic-specific reporting systems and model outputs. The practical contribution of the dataset is to establish a common reference point. Without that, governments end up negotiating from competing narratives rather than shared evidence.

This is why climate diplomacy depends so heavily on datasets that are structured for comparison across countries and across time. A target means little if baseline years, sector coverage, and update schedules are unclear.

Global health and cross-ministerial coordination

Health governance often exposes the difference between a data collection exercise and a dataset fit for decision-making. During preparedness discussions, finance and health officials need records that are interoperable enough to connect service delivery, surveillance and resource planning. When those systems do not align, governments can see the same event through incompatible lenses.

A useful related perspective appears in this analysis of turning data into decisive action on disaster resilience. The central lesson applies beyond disasters. Early warning only works when datasets are timely, legible, and linked to a decision process.

Development finance and accountability

Development institutions routinely use datasets to compare needs, assess performance and review whether commitments are translating into outcomes. The ministerial value lies not only in the existence of a dataset, but in the repeated scrutiny over time enabled by its consistent structure.

A good dataset creates disciplined disagreement. Officials may still dispute priorities, but they are forced to confront the same definitions, records and limits.

That is one reason datasets have become central to international cooperation. They make it harder to hide behind anecdote, and easier to ask whether delivery matches declaration.

The Legal and Ethical Dimensions of Policy Data

A dataset can be analytically elegant and still be unacceptable to use. That is the part many technical explainers miss. In public policy, legality and legitimacy shape whether a dataset should exist in its current form at all.

Purpose should govern design

In UK data governance, a dataset's technical design must reflect legal principles. The Information Commissioner's Office emphasises data minimisation: datasets for public use should limit fields to what is necessary for a clear purpose, while preserving provenance and metadata for accountability. That combination helps organisations avoid compliance drift and re-identification risk, as summarised in the NNLM glossary entry discussing dataset governance and ICO principles.

This has direct operational consequences. If a ministry keeps fields “just in case”, it may create exposure with no policy value. If it strips away provenance and retention controls, it may create the opposite problem: a dataset that cannot be audited when challenged.
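As a rough illustration of limiting fields to a stated purpose, the sketch below keeps only an approved list of fields before release. The function name, field names, and records are hypothetical, and the example is not a compliance mechanism: dropping direct identifiers does not by itself remove re-identification risk.

```python
def minimise(rows, required_fields):
    """Keep only the fields required for the stated purpose."""
    return [
        {k: row[k] for k in required_fields if k in row}
        for row in rows
    ]


# Hypothetical service-use records held internally.
records = [
    {"region": "R1", "age_band": "30-39", "service_used": "clinic",
     "full_name": "Person One", "phone": "000"},
    {"region": "R2", "age_band": "40-49", "service_used": "hospital",
     "full_name": "Person Two", "phone": "111"},
]

# Fields judged necessary for the defined purpose (an assumption here).
purpose_fields = ["region", "age_band", "service_used"]

public_release = minimise(records, purpose_fields)
print(public_release[0])
```

Provenance and retention information would be kept in the dataset's metadata rather than stripped out, so the release can still be audited when challenged.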

Ethics begins where technical adequacy ends

Officials often ask, “Can we link these records?” The harder question is whether they should. Cross-border data flows, welfare analytics, health surveillance and justice systems all raise risks that cannot be solved by cleaner coding alone.

A useful discussion of why health data governance is broader than specialist practice appears in this article on why health data is everyone's business. The wider lesson is that public trust depends on visible restraint. Citizens are more likely to accept legitimate data use when governments can explain purpose, necessity, safeguards and accountability.

For ministers, the ethical checklist is short but demanding:

- Purpose limitation: use data for the defined public objective, not for open-ended convenience.

- Data minimisation: collect and retain only what the task requires.

- Accountability: keep metadata, provenance and governance records intact.

- Non-discrimination: test whether the dataset could reproduce exclusion or skewed targeting.

The strongest policy datasets do not only support insight. They also withstand scrutiny.

Conclusion: How to Integrate Data Into Your Policy Cycle

A dataset is not a machine for producing truth. It is a structured instrument that can support judgement, provided leaders ask disciplined questions before they rely on it.

A minister's working checklist

When a dataset appears in a briefing, use this sequence.

- Check origin and purpose. Who assembled it, for what institutional reason, and under what mandate?

- Test quality and completeness. What is missing, uneven, outdated or suppressed?

- Verify comparability. Are the variables, classifications and reporting rules aligned with those used elsewhere?

- Review consequences. Could its use create legal, ethical or distributive harm?

This checklist changes the quality of policy discussion. It moves debate away from headline figures and towards evidentiary discipline. That is where better decisions usually begin.

Use data as part of the policy cycle, not only at the end

Too many governments bring datasets in late, after policy choices have largely been made. Stronger practice uses them earlier. They should inform problem definition, shape options, support negotiation, and then monitor implementation. That is how datasets become part of governance rather than decoration around it.

There is also a practical compliance benefit. When ministries define variables, metadata and decision rights early, they reduce later disputes over whether delivery can be measured fairly. For an applied example of that link between evidence and accountability, see this piece on using data to improve compliance.

Ministers don't need to become data scientists. They do need to become demanding consumers of datasets.

That is the essential answer to “what is a dataset”. In public leadership, it is a test of administrative seriousness. It shows whether a government can define what it is measuring, compare itself with others, explain its choices, and correct course when evidence shifts.

If you want more policy analysis grounded in practical governance questions, explore Global Governance Media for data-led coverage of summits, compliance, public health, climate and multilateral decision-making.