By Eleanor Hart, Senior Policy Advisor

What exactly are ministers endorsing when they praise tech for good: a sentiment, a sector, or a governance agenda?

That question matters because the gap in current debate isn’t enthusiasm. It’s discipline. Governments, multilateral bodies and firms increasingly use the phrase to signal ethical intent, yet too few policy frameworks distinguish between pilot projects that look constructive and systems that can be financed, regulated and judged against public outcomes. For G7 and G20 leaders, that distinction is no longer academic. It determines whether technology becomes a dependable instrument of resilience, inclusion and decarbonisation, or remains a loose collection of well-branded experiments.

Table of Contents

- Defining Tech for Good Beyond the Buzzword

- The Evolution from Digital Aid to Systemic Impact

- Analysing Key Governance and Regulatory Hurdles

- Illustrative Case Studies for G20 Inspiration

- The Unique Governance Imperative of Artificial Intelligence

- A Measurable Policy Framework for G7 and G20 Action

- Conclusion: From Aspiration to Implementation

- Frequently Asked Questions

Defining Tech for Good Beyond the Buzzword

Tech for good should be understood as technology that is intentionally designed, funded and governed to achieve public-interest outcomes at scale. That is a stricter definition than the one often used in conferences and company reports. It excludes the comforting assumption that any digital product with a social story automatically qualifies.

The economic stakes are already substantial. Global investment into tech for good sectors such as health tech, edtech and cleantech reached $79 billion in 2021, yet policy frameworks still lag in steering that capital towards measurable social outcomes, as noted by the World Economic Forum’s analysis of the meaning and delivery challenge of tech for good. For ministers, that creates a familiar governance problem. Capital is moving faster than accountability.

Public value is the test

A workable definition needs three filters:

- Intent matters: A project should target a public policy objective such as resilience, inclusion, health access or emissions reduction.

- Governance matters: Someone must be responsible for standards on safety, procurement, interoperability and oversight.

- Evidence matters: Claims of benefit should be tied to observable outcomes, not brand positioning.

Without those filters, tech for good becomes too elastic to govern. It can describe a disaster warning platform, a digital identity scheme, a carbon removal facility, or a consumer app with vague social aspirations. Those are not the same category from a regulatory perspective.

Practical rule: Ministers should treat tech for good as a governance class, not a marketing class.

That distinction has implications for G20 agendas. If leaders want technology to advance resilience and equity, they need to embed it within finance ministries, regulators, procurement systems and cross-border coordination forums. That also means moving beyond digital access alone toward institutional capacity and outcome tracking, a challenge that sits alongside broader questions of inclusion in the digital era.

Fragmented goodwill isn’t enough

Many initiatives are sincere. Fewer are durable. The strategic question for governments isn’t whether promising tools exist. It’s whether public institutions can identify which tools deserve long-term support, which require tighter safeguards, and which shouldn’t be scaled at all.

That’s why tech for good belongs on the G7 and G20 agenda as a matter of state capability. The issue isn’t technology alone. It’s whether governments can govern technological systems in line with public purpose.

The Evolution from Digital Aid to Systemic Impact

The current debate didn’t begin with AI. It began with a simpler proposition. If digital tools could improve communication and access, perhaps they could also narrow development gaps. Early efforts were heavily shaped by a delivery mindset. Put devices, connectivity and basic software into underserved settings, then expect social gains to follow.

That earlier phase mattered because it established an important norm. Technology could be directed towards social ends, not just commercial ones. But it also carried a hidden assumption. Access itself was often treated as the outcome, rather than as the starting condition for institutional change.

From access to capability

Over time, the field widened. Social enterprises, open-source communities and mission-led technologists pushed beyond simple access models. The focus shifted toward practical public problems such as health information, remote learning, service delivery and climate adaptation. The philosophical change was significant. Technology was no longer just a donated input. It became part of how organisations designed services and exercised public authority.

Three shifts defined that evolution:

- From hardware to systems: Policymakers became less interested in devices alone and more interested in data flows, platforms and service integration.

- From charity to design: The strongest initiatives stopped treating underserved communities as passive recipients and started treating them as users of systems that needed to work reliably.

- From isolated apps to institutional ecosystems: Public value increasingly depended on standards, procurement rules, interoperable data and long-term operators.

The lesson from earlier digital aid efforts is straightforward. Technology succeeds when institutions can absorb it, govern it and improve it over time.

Why the older model no longer fits

That historical arc explains why current governance can’t rely on legacy assumptions. Today’s interventions often involve machine learning, sensor networks, automated alerts and cross-border datasets. These are not one-off donations. They are living systems with operational, legal and ethical consequences.

A flood prediction model, for example, depends on data quality, model governance, alert protocols and public trust. A climate technology project depends on verification, industrial regulation and market design. A social inclusion platform depends on privacy safeguards and meaningful impact reporting. The complexity has changed the unit of analysis. Ministers can’t evaluate these efforts as standalone products.

The deeper point is that tech for good has matured from a development niche into a statecraft issue. Once technology shapes public warning systems, emissions pathways, social access or eligibility decisions, ministries need stronger tools than goodwill and pilot grants. They need rules, financing architecture and evidence discipline.

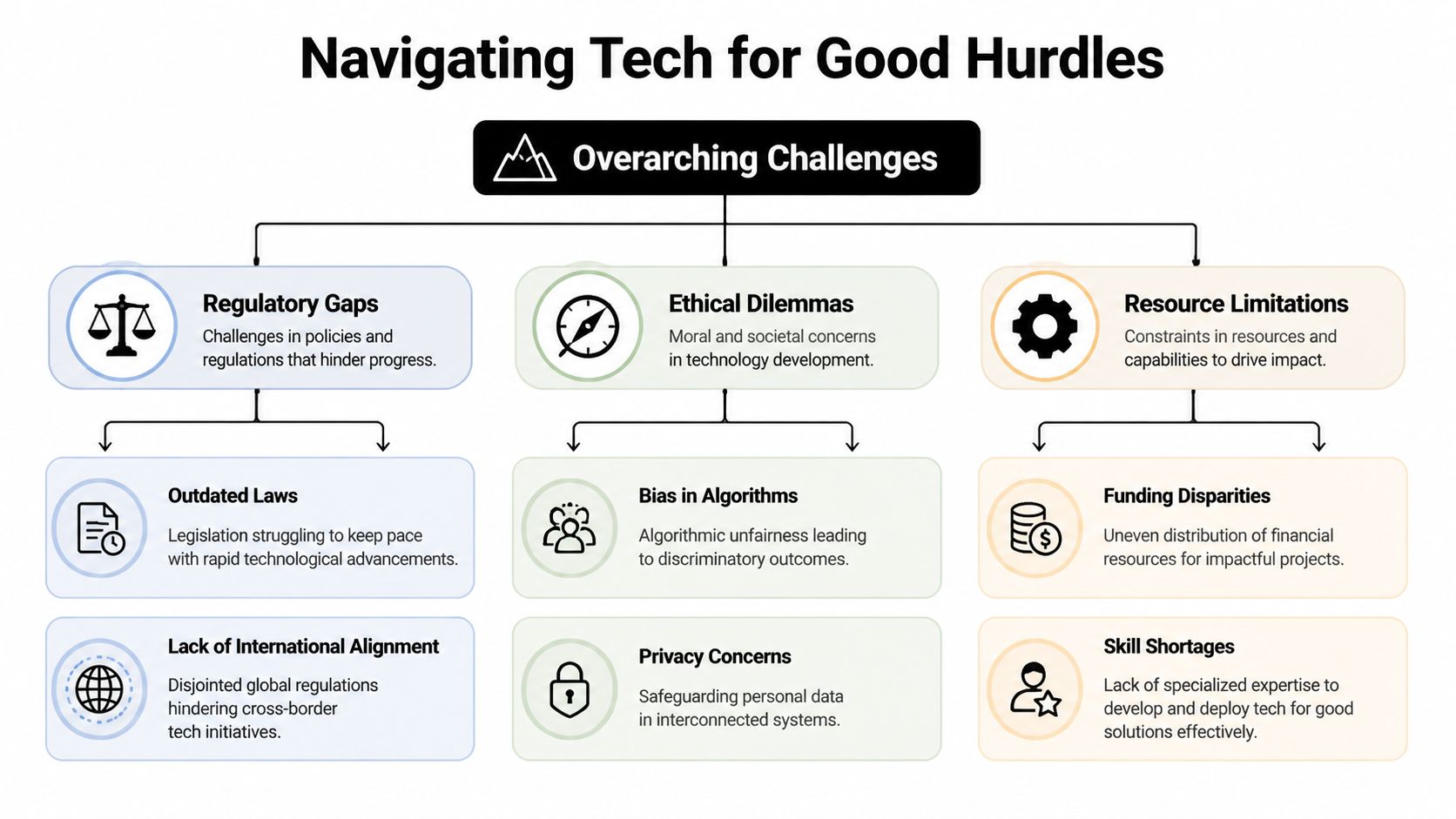

Analysing Key Governance and Regulatory Hurdles

The biggest barrier to tech for good isn’t a shortage of ideas. It’s a shortage of governable pathways to scale.

Many promising interventions stall in the same place. They attract initial enthusiasm, demonstrate technical promise, and then struggle to survive once grant cycles end or procurement systems fail to adapt. That pattern is not accidental. It reflects structural weaknesses in how governments and partners finance, regulate and supervise public-interest technology.

Financing remains the weak link

One of the least discussed obstacles is the financing and sustainability gap. Many non-profits and community-focused organisations deliver digital access and social technology initiatives, yet there is little policy focus on the structural funding models and public-private partnerships needed to sustain them beyond pilots, as highlighted in Mobile Citizen’s review of digital access initiatives.

That has serious implications for ministers. If financing depends on short-term philanthropy or one-off corporate support, governments can’t plan around continuity, service standards or national replication. The result is a credibility problem. Communities experience technology as temporary, while ministries struggle to justify integration into mainstream programmes.

Regulation is still nationally fragmented

The second hurdle is jurisdictional patchiness. Social problems often cross borders, but most digital regulation remains national, sectoral and unevenly enforced. That makes collaboration harder in areas such as disaster risk reduction, cross-border data use and shared technical standards.

For G20 members, the governance implication is clear. Ministers don’t need uniform regulation in every area, but they do need common operating assumptions on interoperability, auditability and public-interest exemptions. Without that, good solutions remain trapped in local silos.

Ethical risk is operational risk

Ethical concerns are often discussed as abstract principles. In practice, they are operational issues.

- Privacy failures can deter vulnerable populations from using public-interest platforms.

- Biased algorithms can distort who receives support, warnings or scrutiny.

- Opaque decision systems can prevent administrators from correcting harmful outputs.

A technology that cannot explain its public consequences is not ready for public scale.

These risks are especially acute when social interventions target the very populations with the least capacity to challenge errors. Ministers should therefore stop separating ethics from implementation. In tech for good, ethics is part of implementation quality.

The missing ministerial question

Too many proposals still receive support because the technology is impressive. The better question is tougher: who pays when the pilot ends, who regulates when the model fails, and who is accountable when harm is distributed unevenly? Until those questions are answered at the start, scale will remain the exception rather than the rule.

Illustrative Case Studies for G20 Inspiration

The most useful examples of tech for good are not the most glamorous ones. They are the ones that show a clear line between technical design, public authority and measurable policy value.

Climate resilience as public infrastructure

In the UK, AI-driven satellite imagery and machine learning models reduced flood prediction errors by up to 40% in high-risk areas, showing a direct cause-and-effect link between technological capability and public resilience. The same deployment combined Sentinel-1 radar data with convolutional neural networks trained on historical flood data, triggered automated alerts to local authorities within minutes of extreme rainfall thresholds, and supported response improvements validated after the 2024 winter storms.

The evidence is unusually policy-relevant because it doesn’t stop at technical novelty. It links predictive accuracy to public action. The system reportedly enabled proactive evacuations, reduced response times, and supported estimated property damage savings during those storms. It also points towards national scaling through the UK’s Flood Innovation Programme and the use of interoperability standards that can connect warning systems across borders.

For G20 ministers, the lesson is not merely that AI can help with flood management. It's that tech for good works best when it is embedded inside operational chains of command. Data collection, model inference, alert thresholds and local authority action all need to line up.

What this case makes visible

- Public agencies remain central: Satellite data and machine learning only create value when ministries, weather services and local authorities act on them.

- Standards enable cooperation: Interoperability matters because resilience systems rarely stop at administrative borders.

- APIs can be policy tools: When actionable interfaces are designed for public authorities, technology becomes easier to integrate into daily operations.

A minister evaluating this kind of model should ask whether the technology improves a whole public workflow, not merely a prediction score.

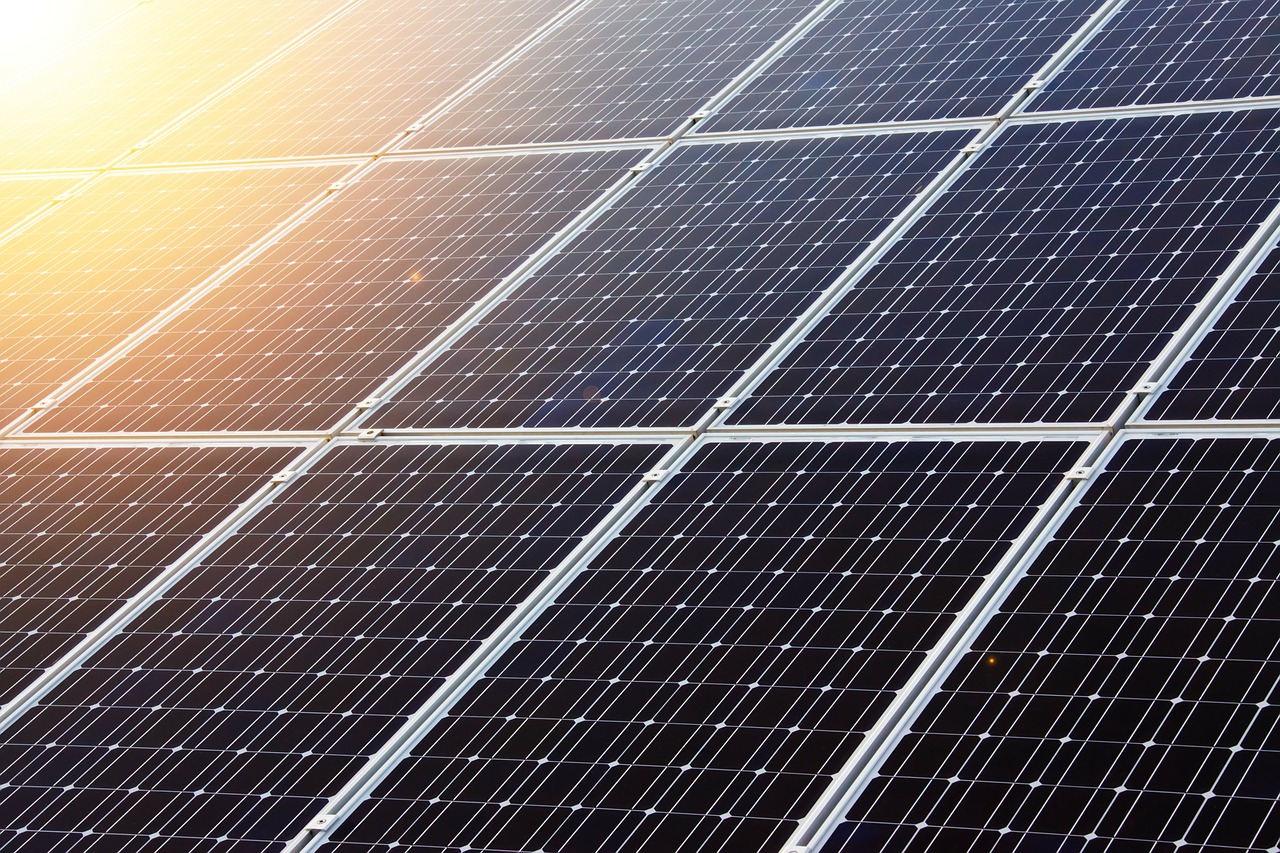

Negative emissions and industrial credibility

A different but equally instructive case comes from UK clean technology. Drax Group’s BECCS facility in Selby, North Yorkshire, demonstrates how industrial technology can be framed as tech for good when it combines verification, climate policy alignment and practical scalability. According to the verified data, the facility captures 1 tonne of CO2 per tonne of sustainable biomass processed, contributes 4 MtCO2/year towards the UK’s net-zero pathway, and shows 98% capture efficiency at 840 MW capacity. The system uses amine-based post-combustion capture with 30% monoethanolamine solvent in a 16 MWth demonstration plant, while maintaining an energy penalty below 10% of gross output through steam integration at 3.5 MJ/kg CO2 captured.

For global governance, the project demonstrates a negative emissions factor of -0.8 tCO2/MWh. Verified data also notes that BECCS deployment displaced 2.5 MtCO2 from unabated coal and gas since 2023, supported 1,200 green jobs in deprived regions, and secured £420 million in G7 Just Energy Transition Partnership funding. The same evidence suggests that scaling to 10 GW by 2030 could offset 8% of UK emissions, with modular designs deployable in 24 months.

This is where climate technology gains governance relevance rather than remaining merely technical. Negative emissions claims are politically sensitive. They require verification, registry compatibility and regulatory legitimacy. The significance of this case is that it links engineering performance to tradable, policy-recognised outcomes through UK ETS integration.

A short explainer is useful here:

Why this matters to G20 governments

| Policy concern | Governance implication |
|---|---|
| Industrial decarbonisation | Tech for good can include heavy infrastructure, not only apps and platforms |
| Carbon market integrity | Verifiable performance is essential for public legitimacy |
| Regional development | Climate technology gains political durability when it supports jobs and place-based transition |

Inclusive growth needs a tougher evidence standard

The third case is more notable for what it lacks. Across inclusion-focused technology initiatives, current coverage still doesn’t provide rigorous evidence on employment outcomes, income growth or long-term social mobility effects. That gap is especially important for ministers because inclusion is often the most rhetorically celebrated category of tech for good.

The implication is uncomfortable but useful. G20 governments should resist the temptation to treat visibility as proof of impact. If a programme aims to improve opportunity for excluded groups, it should be judged against outcomes that matter to those groups, not only against participation or platform usage.

Good intentions are common in inclusive technology. Comparable outcome measures are not.

That is why the next phase of policy has to be less promotional and more forensic.

The Unique Governance Imperative of Artificial Intelligence

Artificial intelligence shouldn’t be treated as just another tool inside the wider tech for good portfolio. It changes the governance problem because it can automate judgement, shape information environments and scale errors faster than conventional digital systems.

AI is not just another delivery tool

A digital payment platform processes transactions. An AI-enabled public system may classify, rank, predict or recommend. That difference matters. Once governments or public-interest organisations rely on automated outputs, they are no longer just digitising a service. They are delegating part of decision formation.

The implications extend well beyond efficiency. In social policy, an AI system can influence who receives attention, how fast warnings are sent, which communities are flagged as high risk, or which narratives dominate an information environment. That creates a higher threshold for audit, explainability and contestability.

A useful comparison comes from climate technology. The verified evidence on the UK-based BECCS facility shows a negative emissions factor of -0.8 tCO2/MWh, offering a verifiable route for G20 countries to align technology deployment with net-zero goals. AI governance should learn from that logic. Public legitimacy grows when claims can be tested, verified and tied to recognised standards, a principle also central to current debates on governing AI in Society 5.0.

Governance must move upstream

Many governments still focus on downstream harms after deployment. That’s too late for high-impact AI systems. Governance has to move upstream into procurement, training data expectations, model documentation and deployment conditions.

Ministers should insist on four disciplines:

- Pre-deployment scrutiny: Public bodies need to know what task the model performs and what failure looks like.

- Human override: Officials must be able to review, challenge and reverse harmful outputs.

- Use limitation: A model approved for one social purpose shouldn’t automatically migrate into another.

- Cross-border coordination: Shared principles matter because vendors and models often operate across multiple jurisdictions.

AI requires a dedicated governance track because its failures can look authoritative even when they are wrong.

Why multilateral forums matter

No single G20 country can fully govern AI-enabled public-interest systems in isolation. Technical supply chains, cloud infrastructure, model providers and information risks all cross borders. That means the right venue for baseline norms is international, even when implementation remains domestic.

The core challenge for ministers is to preserve AI’s problem-solving value without importing a false sense of neutrality into public decision-making. AI can support the public good. But only if governments govern it as a system of power, not merely as software.

A Measurable Policy Framework for G7 and G20 Action

The policy failure in tech for good is not only underinvestment or uneven regulation. It is the absence of a shared measurement discipline.

Current coverage often celebrates interventions without proving whether they improve people’s life chances. That gap is especially stark in social inclusion. As noted in CIO’s discussion of professional organisations focused on diversity in tech, there is no standardised tracking to show whether many technology tools reduce entrenched inequalities, creating an accountability vacuum for policymakers.

What ministers should measure

A stronger framework starts by separating activity metrics from outcome metrics. Activity tells you that a programme existed. Outcome tells you whether it changed anything that matters.

Ministers should require every publicly backed tech for good initiative to report across four layers:

- Problem definition: The sponsoring institution should state the public harm or gap being addressed in plain language.

- Operational performance: This includes whether the system functions reliably inside real institutions, not only in controlled tests.

- User protection: Ministries should track privacy safeguards, grievance processes and the ability to contest harmful decisions.

- Socioeconomic effect: The hardest but most important layer. If the claim is inclusion, resilience or mobility, reporting should connect to those outcomes over time.

This is where political discipline matters. Governments often ask too little of social technology because they fear discouraging innovation. The opposite is true. Clearer measurement makes serious innovation easier to identify and support.

Ministerial test: If a programme cannot explain how public value will be measured, it is not ready for public scale.
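For officials who want to see how the four reporting layers and the ministerial test could translate into a routine check, the sketch below is one possible shape. It is illustrative only: the `InitiativeReport` record, its field names and the example values are assumptions made for this sketch, not an existing reporting standard. Activity metrics are deliberately kept apart from outcome metrics so that usage figures cannot stand in for evidence of effect.

```python
from dataclasses import dataclass, field


@dataclass
class InitiativeReport:
    """Hypothetical four-layer report for one publicly backed initiative."""
    name: str
    problem_definition: str = ""                              # layer 1: public harm or gap, in plain language
    operational_metrics: dict = field(default_factory=dict)   # layer 2: reliability inside real institutions
    user_protections: list = field(default_factory=list)      # layer 3: privacy, grievance, contestability
    outcome_metrics: dict = field(default_factory=dict)       # layer 4: socioeconomic effect over time
    activity_metrics: dict = field(default_factory=dict)      # sign-ups, sessions: recorded but never sufficient


def missing_layers(report: InitiativeReport) -> list[str]:
    """Return the names of the reporting layers that are still empty."""
    layers = {
        "problem definition": report.problem_definition.strip(),
        "operational performance": report.operational_metrics,
        "user protection": report.user_protections,
        "socioeconomic effect": report.outcome_metrics,
    }
    return [name for name, value in layers.items() if not value]


def ready_for_public_scale(report: InitiativeReport) -> bool:
    """Ministerial test: every layer must be reported; activity data alone does not count."""
    return not missing_layers(report)


# Illustrative pilot with strong usage numbers but no outcome evidence (values are invented).
pilot = InitiativeReport(
    name="Example skills platform",
    problem_definition="Low access to vocational training in rural districts",
    activity_metrics={"sign_ups": 12000},
)
print(ready_for_public_scale(pilot), missing_layers(pilot))
```

The design choice that matters is that `activity_metrics` is ignored by the readiness check: a programme with high participation still fails the test until it reports on operations, user protection and socioeconomic effect.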

A practical starting point would be to align reporting requirements with procurement and summit tracking processes. That would allow governments to compare interventions across sectors and improve follow-through, which is especially relevant to wider debates on predicting compliance with G20 commitments.

G20 Policy Framework for Tech for Good

| Policy Domain | Recommendation | Key Performance Indicator (KPI) |
|---|---|---|
| Public-interest definition | Require funded projects to specify the policy objective, target population and theory of change before launch | Share of funded projects with published outcome framework |
| Impact accountability | Create a common reporting template for operational, ethical and socioeconomic outcomes | Proportion of projects reporting against a standardised template |
| Sustainable finance | Establish blended finance vehicles that combine public funding with mission-aligned private capital for long-term delivery | Number of initiatives with multi-year financing arrangements |
| Procurement reform | Update procurement rules so ministries can commission interoperable, auditable social technology rather than one-off pilots | Share of procurements requiring interoperability and audit provisions |
| Regulatory experimentation | Use controlled sandboxes for high-potential public-interest technologies in areas such as resilience and inclusion | Number of sandbox-tested solutions that proceed to formal adoption |
| Data governance | Mandate clear data stewardship, consent rules and redress pathways for vulnerable users | Percentage of programmes with published data governance and complaints mechanisms |
| Cross-border coordination | Develop G20 guidance on minimum standards for public-interest AI, resilience platforms and shared evaluation methods | Adoption of common guidance across participating governments |
| Exit and continuity planning | Require projects to specify who operates, maintains or retires systems after the pilot phase | Share of initiatives with approved continuity or decommissioning plans |

This framework does not require perfect consensus. It requires enough common structure that ministers can compare initiatives, identify failure early and scale what works responsibly.
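Several of the KPIs in the framework are simple shares of a project portfolio, which means they can be computed the same way across ministries once the underlying records exist. The sketch below shows one way to do that. The record fields (`outcome_framework_published`, `continuity_plan_approved`) and the four sample projects are hypothetical and exist only to illustrate the calculation.

```python
def share(projects: list[dict], flag: str) -> float:
    """Fraction of projects whose boolean field `flag` is true (0.0 for an empty portfolio)."""
    if not projects:
        return 0.0
    return sum(1 for p in projects if p.get(flag, False)) / len(projects)


# Invented portfolio records; a real register would be maintained by the funding ministry.
portfolio = [
    {"name": "Project A", "outcome_framework_published": True, "continuity_plan_approved": True},
    {"name": "Project B", "outcome_framework_published": True, "continuity_plan_approved": False},
    {"name": "Project C", "outcome_framework_published": True, "continuity_plan_approved": False},
    {"name": "Project D", "outcome_framework_published": False, "continuity_plan_approved": False},
]

kpis = {
    "Share of funded projects with published outcome framework":
        share(portfolio, "outcome_framework_published"),
    "Share of initiatives with approved continuity or decommissioning plans":
        share(portfolio, "continuity_plan_approved"),
}

for label, value in kpis.items():
    print(f"{label}: {value:.0%}")
```

A missing field is counted as "not met" rather than skipped, so gaps in reporting lower the KPI instead of flattering it.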

What this changes in practice

The immediate effect would be a shift in incentives. Project sponsors would know they must demonstrate public value, not only technical sophistication. Ministries would gain a clearer basis for funding decisions. Private partners would face stronger expectations on accountability and durability.

That is the missing bridge between political aspiration and administrative reality.

Conclusion: From Aspiration to Implementation

Tech for good has reached a point where optimism alone is no longer useful. The concept now sits at the intersection of industrial policy, resilience planning, digital regulation and social legitimacy. That gives G7 and G20 ministers a sharper responsibility. They must decide which technologies deserve public backing, under what conditions, and according to which standards of proof.

The examples are instructive. Some technologies already show what disciplined alignment can achieve in climate resilience and emissions reduction. Yet the broader field still suffers from two weaknesses that policymakers can no longer ignore. Too many initiatives lack durable financing. Too many claims of impact remain weakly measured.

The real policy shift

The deeper change required is conceptual. Governments should stop treating tech for good as a catalogue of admirable projects and start treating it as a governance portfolio. That means applying the same seriousness that ministers bring to energy security, public finance or health systems.

A mature approach would include:

- Clear definitions of public-interest purpose

- Stable financing structures beyond pilot logic

- Outcome-based accountability rather than narrative-based legitimacy

- Dedicated AI governance where automated systems shape public decisions

- Cross-border standards that make cooperation feasible

The central question for the next summit cycle is not whether technology can support the public good. It is whether governments are prepared to govern that support credibly.

If they are, tech for good can become a practical instrument of multilateral problem-solving. If they are not, the phrase will continue to expand while its policy value thins out. G20 leaders should use the next round of ministerial work to adopt measurable reporting norms, build sustainable financing channels and create common expectations for high-impact systems. That is how conversations become commitments, and commitments become durable public results.

Frequently Asked Questions

What should ministers ask before funding a tech for good initiative?

They should ask five questions. What public problem is being addressed? Who is accountable for delivery? How will the initiative be financed after the pilot period? What harms could arise for users? How will success be measured in real-world outcomes rather than usage alone?

Who should lead a national tech for good agenda?

No single ministry can do it alone. Finance ministries, digital regulators, sector ministries and procurement authorities all need a role. The strongest model is usually a cross-government arrangement with clear ownership of standards, funding and evaluation.

How can governments support innovation without lowering safeguards?

By separating experimentation from exemption. Regulatory sandboxes can allow supervised testing, but they should still require transparency, user protection and review mechanisms. Innovation becomes more credible when rules are clear.

Why is impact accountability so difficult in this field?

Because many initiatives measure what is easy to count rather than what matters. Access, sign-ups and participation are simpler to report than resilience, mobility or inclusion. Governments need common templates and longer-term evaluation expectations if they want better evidence.

Where does AI fit within tech for good policy?

AI should sit within the broader agenda but with a dedicated governance track. Its capacity to automate judgement and shape public decisions means it needs stronger scrutiny than ordinary digital tools.

Global Governance Media helps decision-makers turn complex summit agendas into practical policy choices. Explore more analysis, interviews and forward-looking briefings at Global Governance Media to strengthen your next G7 or G20 strategy with clearer evidence, sharper governance thinking and actionable international context.